Open Source Models

The Question

I think this question bugs a lot of people: how accurate are open-source models? Are they really that accurate compared to proprietary models like Claude, ChatGPT, etc.?

The honest answer is no, but that doesn't mean they're useless. They often perform as well as or better than the models you actually use on a daily basis. What do I mean? Well, if you have Claude Code, for example, you don't use the most capable Claude Opus model for all of your tasks, right? You use Sonnet or a lighter version most of the time, and some open-source models do beat those in benchmarks.

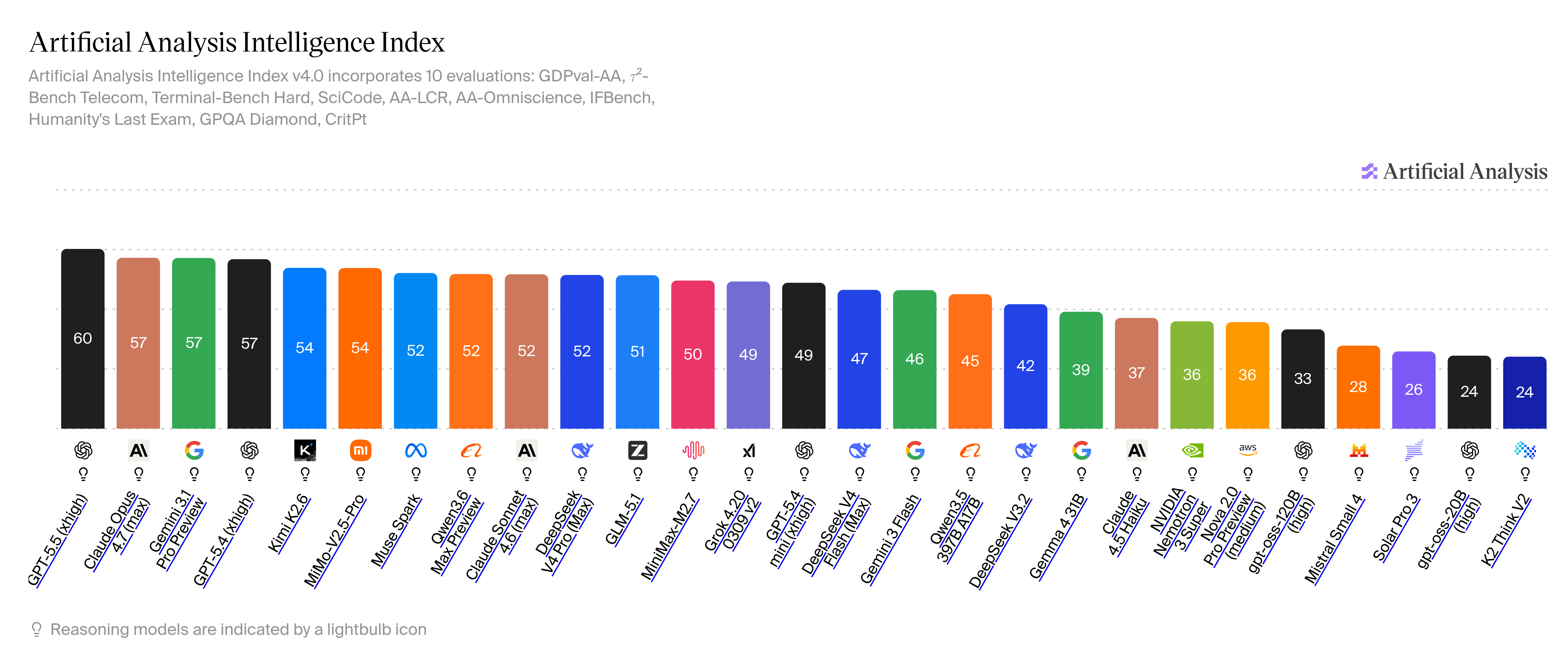

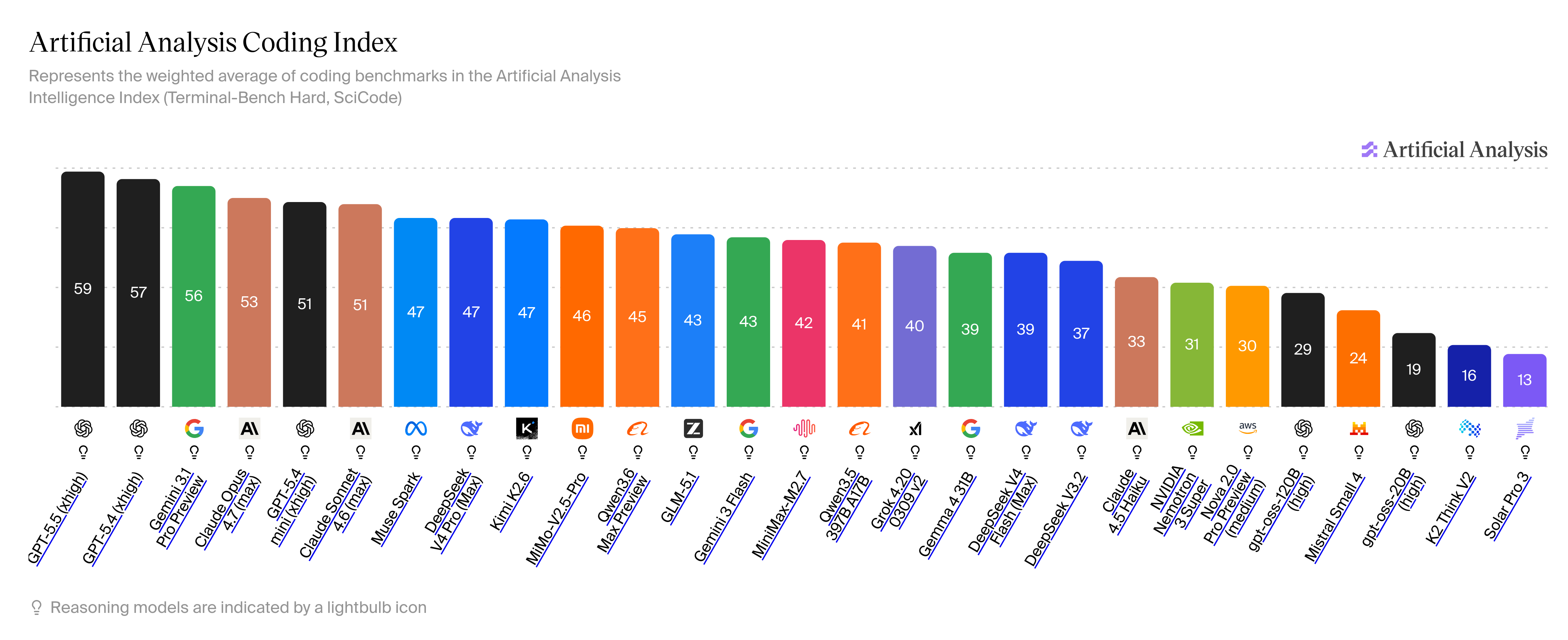

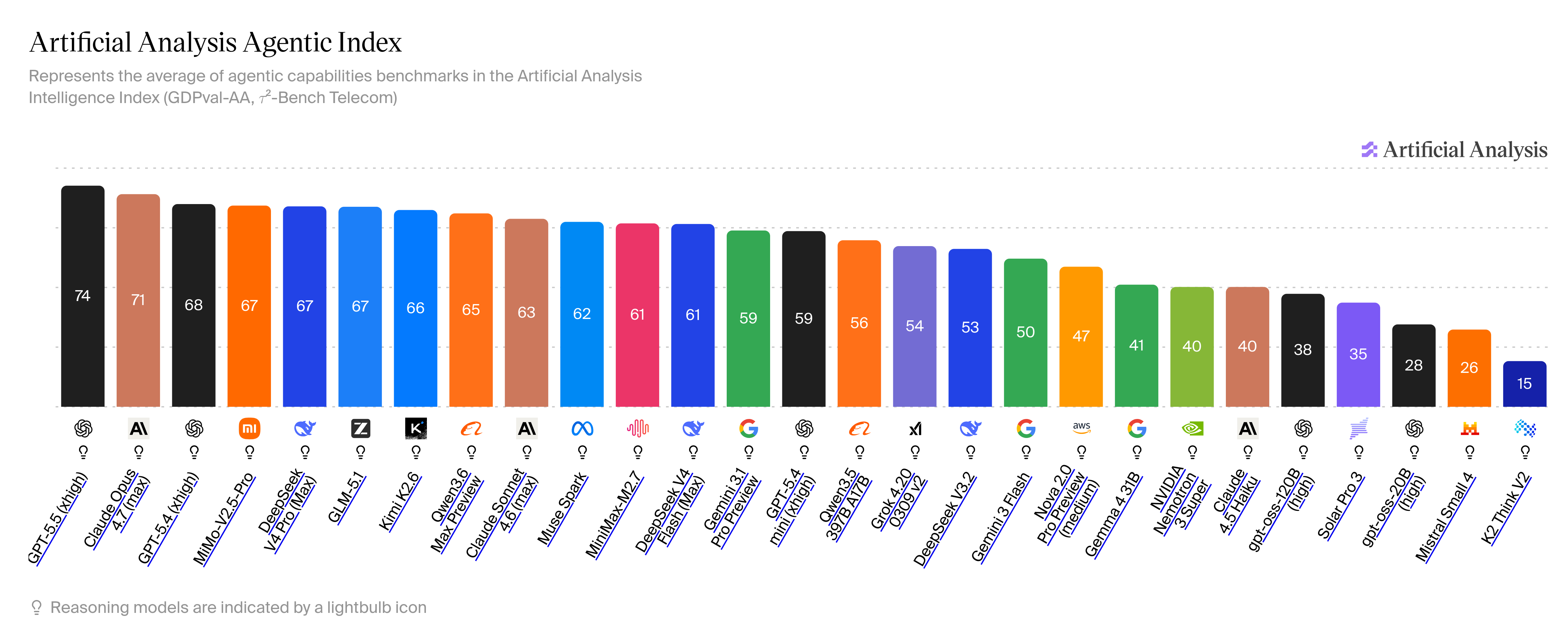

These benchmarks are from Artificial Analysis as of 29 April 2026, covering the Intelligence Index, the Coding Index, and the Agentic Index.

As the benchmarks show, some open-source models such as Kimi K2.6, GLM 5.1, MiMo-V2.5 Pro, Qwen3.6 Max, and MiniMax-M2.7 are on par with or exceed the day-to-day proprietary models.

Why Use Open Models

This is also a common question: why should we use open models? Open-source models are cheap compared to their closed-source alternatives, and that is solely because they're "open": anyone can download and host them, so competition between hosts brings the overall cost of using them down.

One more thing that might attract you (it attracted me) is seeing how capable they are compared to competitors like Claude, Gemini, or ChatGPT. The research done to make these models both cost-efficient and effective is interesting to look at. Here are some articles worth reading on the topic:

- DeepSeek-Coder-V2: Breaking the Barrier of Closed-Source Models in Code Intelligence

- Welcome Gemma 4: Frontier multimodal intelligence on device

- DeepSeek-V2: A Strong, Economical, and Efficient Mixture-of-Experts Language Model

- DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning

- Attention Residuals

How to Use Open Models

Many of the best open-source models are available in [[Nvidia/Introduction|NVIDIA NIM]], and you can use them directly from there, but there are some constraints to keep in mind:

- Be as specific as possible in your prompts. Unlike models served by other providers, these generally don't come with a preloaded system prompt, so anything you don't state is left to the model.

- Know which model to use for which task. These are some models that give consistent output:

MiniMax Family (Excellent for coding)

- MiniMax-M2.7

- MiniMax-M2.5

Kimi Family (Excellent for coding/general tasks)

- Kimi K2.7

- Kimi K2.5

GLM Family (Excellent for coding/general tasks)

- GLM 5.1

- GLM 5

Other Good Models

- Qwen 3.6

- DeepSeek v4.0

- Google Gemma 4 31B IT
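
To make the two constraints from this section concrete, here is a minimal Python sketch of a request that states its role and rules explicitly in a system message, plus a small lookup of which families to try for a task. It rests on several assumptions that are not confirmed by this page: that the hosted NVIDIA endpoint accepts OpenAI-style chat-completions requests at the URL shown, that the API key lives in an environment variable named `NVIDIA_API_KEY`, and that `example-org/example-model` is only a placeholder for whatever model ID the catalog lists. The helper names (`suggest_families`, `build_payload`, `send`) are made up for illustration.

```python
import json
import os
import urllib.request

# Assumption: an OpenAI-style chat-completions endpoint; check the provider docs.
API_URL = "https://integrate.api.nvidia.com/v1/chat/completions"

# Families from the lists above, keyed by the kind of task they are suggested for.
FAMILIES_BY_TASK = {
    "coding": ["MiniMax", "Kimi", "GLM"],
    "general": ["Kimi", "GLM"],
}


def suggest_families(task_kind):
    """Return the model families suggested for a task kind (empty if unknown)."""
    return FAMILIES_BY_TASK.get(task_kind, [])


def build_payload(model_id, role, rules, user_request, temperature=0.2):
    """Build a chat request that spells out the role and rules in a system message."""
    system_prompt = f"You are {role}.\n" + "\n".join(f"- {rule}" for rule in rules)
    return {
        "model": model_id,
        "temperature": temperature,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_request},
        ],
    }


def send(payload, api_key):
    """POST the payload and return the text of the first choice."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    payload = build_payload(
        model_id="example-org/example-model",  # hypothetical ID, replace with a real one
        role="a senior Python reviewer",
        rules=["Reply in English", "Return only the corrected code"],
        user_request="Fix the off-by-one error in: for i in range(1, len(xs)): print(xs[i])",
    )
    api_key = os.environ.get("NVIDIA_API_KEY")  # assumed variable name
    if api_key:
        print(send(payload, api_key))
    else:
        # Without a key, just show what would be sent.
        print(json.dumps(payload, indent=2))
```

Keeping the payload builder separate from the network call makes it easy to inspect exactly what the model will see, which matters more here because nothing is added to your prompt on the provider side.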